Quality takes time and reduces quantity, so it makes you, in a sense, less efficient. The efficiency-optimized organization recognizes quality as its enemy. That’s why many corporate Quality Programs are really Quality Reduction Programs in disguise.

Slack: Getting Past Burnout, Busywork, and the Myth of Total Efficiency

This is how one of my favorite authors describes what leads to deprioritizing quality. I have wondered about it myself: what leads to it? What makes it difficult for organizations to recognize quality? Why do attempts to make everything efficient usually result in the death of quality? Is the unicorn of achieving efficiency without losing quality possible?

The answer is yes, but there is some fine print to it.

The first time I saw how quickly quality can deteriorate in an Agile world was at a company that thought it was religiously following The Lean Startup book. They were following a ‘lean’ approach with metrics and product testing. But they were also very quickly overwhelmed by pushing out as many features as possible, because sales were panicking about losing clients and missing goals. You can imagine the state of the code. A monolith that only one person in a 40-strong engineering team knew how to deploy well. No automation. A lot of praying. Very little documentation. A lot of smart people too.

That is when I learned about something called left-shifting quality. The effort was not for engineering; it was mainly for product discovery. I learned a very valuable lesson here: a lot of wasted effort and long hours of development can be saved if someone manages expectations and sets very clear and informed objectives well before a single line of code is written.

This is also when I learned to respect engineers who can just say – No, I won’t do it, because I don’t understand it. Where is the value?

Again, if you want to optimize the time sales and marketing spend with the product manager, sure, you can! But then this effort is shifted right, to the engineers. Engineers can build anything, but without this initial discovery filter they can’t build anything on time. So the first step to quality is sales and marketing knowing exactly what their clients need, and not everything at once (they have product managers to support them here in terms of value). The ability to say no is absolutely vital here.

But this was just the start of my journey with valuable lessons learned on left-shifting quality.

At another company I faced a completely different challenge. It also had a sizeable engineering department. This one had relatively well-functioning processes outside engineering. But once anything reached engineering, it became a mess of delays and miscommunication. The problem here was different. The engineers worked in a pool. They had millions of existing products to manage, none of which were ever properly maintained, because the engineers were just jumping from one project to another. Again, a group of excellent and smart people.

I recently read an excellent paper on code ownership. Once I read it, the problem became clear to me, though I could not grasp it back when I was in that role. The developers did not really own the code. They could not understand it, and each project was a new learning curve. It also meant that everything developed in the past was poorly documented due to lack of time. On top of that, when these things failed, people were pulled out of their current work to swarm and fix it quickly, or we will go under … bah humbug … clients will leave!

That is when I became a believer that the second step to proper quality is strong code/product ownership by a dedicated engineering team.

You might not know you have ownership issues if a few heroes in your organization make the problem less apparent. But wait … once the heroes leave, all your skeletons will start falling out of the closets. This is also highlighted in this study on open-source code ownership.

The results of ownership studies done on closed-source projects do not generalize to FLOSS projects. To understand the reasons for that, we performed an in-depth analysis and found that the lack of correlation between ownership metrics and module faults is due to the distributions of contributions among developers and the presence of “heroes” in FLOSS projects.

Source: EASE ’14: Proceedings of the 18th International Conference on Evaluation and Assessment in Software Engineering May 2014 Article No.: 39 Pages 1–9 https://doi.org/10.1145/2601248.2601283
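If you want to put rough numbers on ownership in your own codebase, here is a minimal sketch. It assumes you can export your commit history as (module, author) pairs. The function name, the 5% cut-off for a "minor" contributor and the sample data are my own illustrative assumptions, not the method of the study quoted above.

```python
from collections import Counter
from typing import Dict, Iterable, Tuple


def ownership_metrics(
    commits: Iterable[Tuple[str, str]],
    minor_threshold: float = 0.05,  # assumed cut-off for a "minor" contributor
) -> Dict[str, dict]:
    """Summarise who owns each module, given one (module, author) pair per commit."""
    per_module: Dict[str, Counter] = {}
    for module, author in commits:
        per_module.setdefault(module, Counter())[author] += 1

    report = {}
    for module, counts in per_module.items():
        total = sum(counts.values())
        top_author, top_commits = counts.most_common(1)[0]
        # Contributors whose share of commits falls below the threshold
        minor = [a for a, n in counts.items() if n / total < minor_threshold]
        report[module] = {
            "top_author": top_author,
            "ownership": top_commits / total,  # share held by the top author
            "minor_contributors": len(minor),
            "total_contributors": len(counts),
        }
    return report


# Hypothetical history: "billing" depends on a single hero, "search" is shared.
sample_commits = (
    [("billing", "ana")] * 38
    + [("billing", "ben"), ("billing", "cy")]
    + [("search", "ben")] * 3
    + [("search", "cy")] * 2
)
report = ownership_metrics(sample_commits)
for module, stats in report.items():
    print(module, stats)
```

A module where one author holds nearly all the commits and everyone else is a minor contributor is a good candidate for the "what happens when the hero leaves?" conversation.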

This led me to investigate what else could contribute to quality.

In one of my first jobs I built a simple enough application. A smaller company, fewer developers. The release process was stopping a server, copying a new file over FTP and restarting the server. All worked well until I went on holiday … and my team had to deploy a patch. Let’s fast-forward to the DB restore after they tried. A complete catastrophe. They had no clue what to do, and I had not been keeping notes. I never thought to show them how to do it. 90% of the ‘easy’ process was in my head. FAIL!

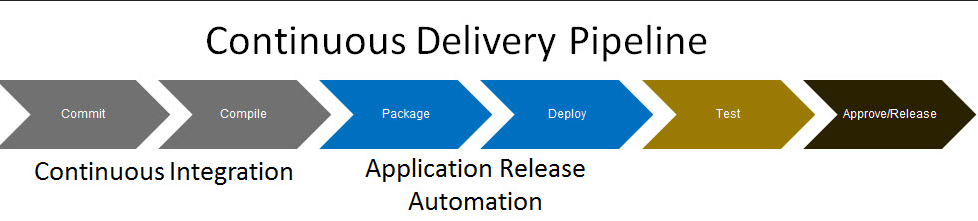

A lot of time had to pass before the newborn DevOps movement started to enlighten me on how to tackle these problems. There are a lot of books worth mentioning in this area, but the one that taught me the most at the time, and is definitely worth reading, was Continuous Delivery: Reliable Software Releases through Build, Test, and Deployment Automation.

I absolutely fell in love with this approach the first time I used Maven and SVN! (I know … bleh … SVN is not trendy today, though it really was to a person who had mainly used ClearCase before that!)

This led me to the third step in defining quality: being able to achieve repeatable results with minimal manual effort.
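To make this concrete, here is a minimal sketch of what my old "stop the server, copy the file, restart" routine could have looked like as a script instead of living in my head. Everything in it is an illustrative assumption: the `deploy` function, the `.bak` backup convention and the idea of passing in the stop/start actions as callables. It is not the process from the Continuous Delivery book, just the smallest step away from manual work.

```python
import shutil
from pathlib import Path
from typing import Callable


def deploy(
    artifact: Path,
    live_file: Path,
    stop_server: Callable[[], None],
    start_server: Callable[[], None],
) -> None:
    """Replace live_file with artifact, keeping a backup so a failure can be rolled back."""
    backup = live_file.with_name(live_file.name + ".bak")
    stop_server()
    try:
        if live_file.exists():
            shutil.copy2(live_file, backup)  # keep the previous release
        shutil.copy2(artifact, live_file)    # put the new release in place
    except Exception:
        if backup.exists():
            shutil.copy2(backup, live_file)  # roll back to the previous release
        raise
    finally:
        start_server()  # the server comes back up whether or not the copy worked
```

The point is not the dozen lines of code. Once the steps are written down as something executable, anyone on the team can run them while you are on holiday, and a failed copy no longer ends in a DB restore.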

This was not the end of the journey though.

I am not sure how many of today’s developers ever worked with vanilla JavaScript in the early days of Web 2.0 development. Let’s say it was … a bit of a pain. And not only because of the differences between browsers. We were wondering back then: do we really, really need to maintain all of this code?

To be fair, back then you would know a lot more about memory management, which loops are faster, etc. The question was: is it knowledge I really need, or just something I have to know? And … 12 years later we are up to 10 new JS frameworks per week :).

Outsourcing complexity became the direction software had to move in if products were to remain competitive. This only intensified once cloud computing came into the picture. One can wonder whether things like ‘well-architected frameworks’ are just sales and marketing stories trying to convince developers to build things this way rather than that way, because the vendor needs to sell more. Still, the complexity your direct team manages keeps shrinking. This means you can spend more time on other things.

The days of most engineers understanding all the guts of their software are in the past. You are still encouraged to learn more, but remember that most companies also need to earn money to pay your salary. They do so by staying competitive and delivering high quality. The in-house software movement is passé, whether we like it or not. In the words of the excellent people behind the book Accelerate: The Science of Lean Software and DevOps: Building and Scaling High Performing Technology Organizations:

Low performers use more proprietary software than high and elite performers: The cost to maintain and support proprietary software can be prohibitive, prompting high and elite performers to use open source solutions. This is in line with results from previous reports. In fact, the 2018 Accelerate State of DevOps Report found that elite performers were 1.75 times more likely to make extensive use of open source components, libraries, and platforms.

Source: State of DevOps 2019 report

Looks like the fourth quality factor is the ability of the organization to outsource complexity.

And lastly, a very recent lesson learned. I mentioned The Lean Startup approach at the beginning of this article; it hinted at the last quality component.

Metrics.

My first ever quality metric was the number of defects detected in production. This was a good discussion tool, but it was missing something. I subsequently supplemented it with code coverage and other metrics. They all felt a bit subjective to me. That was until I discovered the DORA metrics.

Source: State of DevOps 2019 report

To quote myself :):

Not measuring things reliably is like not knowing how much money you have, just because you are afraid to look up your balance sheet. Or worse! Purposely hiding your balance sheet. This is why [I like to] employ well researched, engineering-focused DORA metrics, so that our results can be reasonably judged and we review these results daily, to achieve success.

Source: https://www.linkedin.com/pulse/communitea-gustaw-fit-adele-lewis/?trackingId=M0OmAGHRQx6jDG5MOpUztQ%3D%3D

The last, fifth process rule for achieving quality is measuring the right things.
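For anyone who has not met them yet, the four DORA metrics are deployment frequency, lead time for changes, change failure rate and time to restore service. Below is a minimal sketch of how they could be computed from a list of deployment records. The `Deployment` record, its field names and the sample data are hypothetical; in practice the data would come from your CI/CD and incident tooling, and the exact definitions should follow the State of DevOps report rather than my simplification.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from statistics import median
from typing import List, Optional


@dataclass
class Deployment:
    committed_at: datetime                  # when the change was committed
    deployed_at: datetime                   # when it reached production
    failed: bool = False                    # did it cause a failure in production?
    restored_at: Optional[datetime] = None  # when service was restored, if it failed


def dora_metrics(deployments: List[Deployment], period_days: int) -> dict:
    """Compute the four DORA metrics for deployments made within period_days."""
    if not deployments:
        raise ValueError("need at least one deployment")
    lead_times = [d.deployed_at - d.committed_at for d in deployments]
    failures = [d for d in deployments if d.failed]
    restore_times = [d.restored_at - d.deployed_at for d in failures if d.restored_at]
    return {
        "deployment_frequency_per_day": len(deployments) / period_days,
        "median_lead_time": median(lead_times),
        "change_failure_rate": len(failures) / len(deployments),
        "median_time_to_restore": median(restore_times) if restore_times else None,
    }


# Hypothetical two-day window with four deployments, one of which failed.
base = datetime(2024, 1, 1, 9, 0)
deployments = [
    Deployment(base, base + timedelta(hours=2)),
    Deployment(base, base + timedelta(hours=4)),
    Deployment(base, base + timedelta(hours=6), failed=True,
               restored_at=base + timedelta(hours=7)),
    Deployment(base, base + timedelta(hours=24)),
]
metrics = dora_metrics(deployments, period_days=2)
for name, value in metrics.items():
    print(f"{name}: {value}")
```

I used the median rather than the mean here so that one unusually slow change does not dominate the picture; that is a design choice of this sketch, not a rule.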

The story comes to an end here. I would like to highlight that, on top of all the rules of war, there is always an open, flexible and accepting company culture. No amount of process or improvement will replace having the right people.

The efficiencies you gain are actually outsourced: to automation, to more work for client teams, or to whoever takes on your complexity. The effort does not disappear!

This post is also slightly opinionated and based strictly on my experiences. Apologies if my views came across as too aggressive. If anything, I really encourage a discussion – I will make every effort to respond to each comment!

Wrapping up, my five components of software quality are:

- sales and marketing knowing what exactly their clients need and not everything at once

- strong code/product ownership by a dedicated engineering team

- being able to achieve repeatable results with minimal manual effort

- the ability of the organization to outsource complexity

- measuring the right things

This should all be supported by the right company culture and obviously adapting based on the lessons learned.

Thank you and read you in the next article!
